Have you heard about metadata? In short, metadata is data about data: for instance, the dataset name, when the dataset was created, what columns it contains, and so on. It is a very important area of data management, but unfortunately not many organizations pay attention to it. This post aims to bring your attention back to metadata, explain why it matters, and share how to go about managing it.

Why is Metadata important?

Well, if you are going to work with data, you cannot avoid data errors. Data errors can be very time-consuming because time goes into both investigating and rectifying them. During an error investigation, we have to trace the data lineage, i.e. how a column in the final dataset is derived from the raw data downloaded from existing systems. We also have to look at the scripts that generate all the intermediate and final datasets. This can be very tedious because, firstly, production code is never just a few lines. Secondly, if you are working in a large organization, tracing data lineage can be very painful because a column might be derived from various raw and/or intermediate data sources and go through many levels of calculation. In short, data error investigation is very tedious, and metadata that records where each column comes from is what keeps it manageable.

Types of Metadata

There are two types of Metadata:

Business

It provides the business context for the data. For instance, in credit card application data for Singapore, the minimum age to hold a credit card is 21 years old. Thus, in the applicant's age column, we should not see anyone below the age of 21.
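To make this concrete, here is a minimal sketch of how a business rule like the minimum age could be recorded as metadata and then used to check the data. The column name `applicant_age`, the rule structure, and the sample rows are all assumptions made up for illustration, not a standard format.

```python
# Hypothetical business metadata: one rule per column, stored as data
# rather than buried in a script. Names and structure are assumptions.
BUSINESS_RULES = {
    "applicant_age": {
        "description": "Age of the credit card applicant in years",
        "min": 21,  # minimum age to hold a credit card, per the example above
    },
}


def find_violations(rows, rules):
    """Return (row_index, column, value) for every value below its rule's minimum."""
    violations = []
    for index, row in enumerate(rows):
        for column, rule in rules.items():
            value = row.get(column)
            if value is not None and value < rule["min"]:
                violations.append((index, column, value))
    return violations


# Made-up sample rows, for illustration only.
applications = [
    {"applicant_id": "A001", "applicant_age": 34},
    {"applicant_id": "A002", "applicant_age": 19},
    {"applicant_id": "A003", "applicant_age": 21},
]

print(find_violations(applications, BUSINESS_RULES))  # [(1, 'applicant_age', 19)]
```

Keeping the rule next to its description means that whoever investigates an odd value can see both the check and the business reason behind it in one place.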

Technical

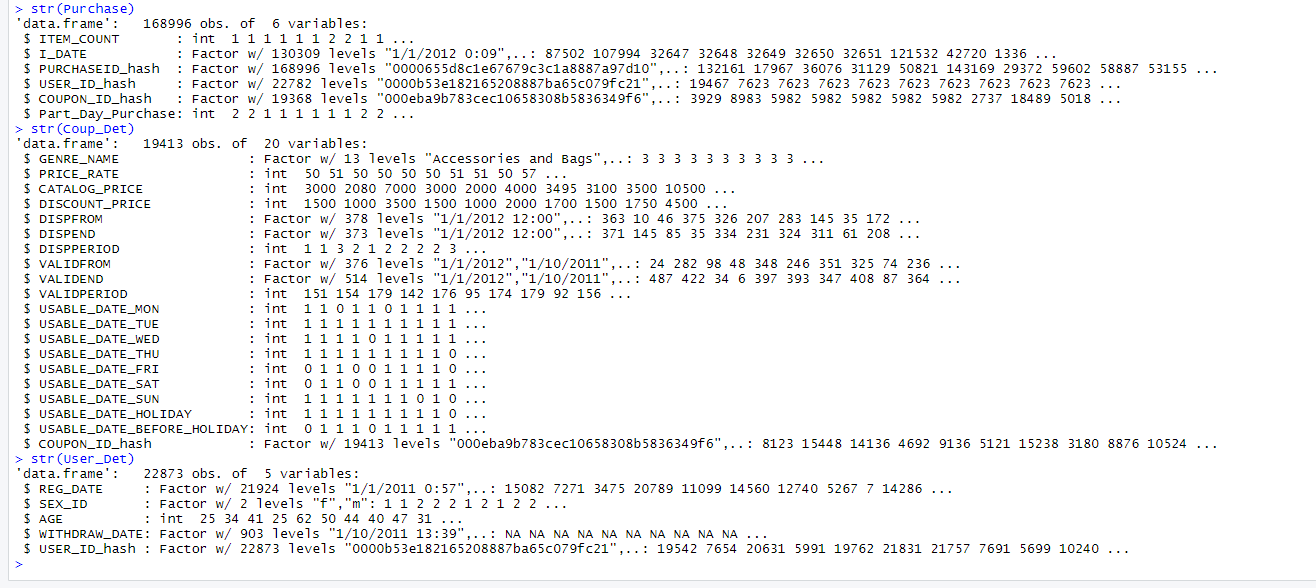

It provides the technical context for the data, allowing the data owners and the IT department to maintain the data well. An example is a naming convention for datasets from transaction systems.
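As a rough illustration, a naming convention can be written down as a pattern and checked automatically. The convention used here, `<source system>_<subject>_<YYYYMMDD>` in lowercase, is purely an assumption for the example; your organization's convention will differ.

```python
import re

# Hypothetical convention: <source system>_<subject>_<YYYYMMDD>, all lowercase,
# e.g. "cards_transactions_20240131". The pattern itself is an assumption.
DATASET_NAME_PATTERN = re.compile(
    r"(?P<source>[a-z]+)_(?P<subject>[a-z]+)_(?P<date>\d{8})"
)


def parse_dataset_name(name):
    """Return the parts of a dataset name as a dict, or None if it breaks the convention."""
    match = DATASET_NAME_PATTERN.fullmatch(name)
    return match.groupdict() if match else None


print(parse_dataset_name("cards_transactions_20240131"))
# {'source': 'cards', 'subject': 'transactions', 'date': '20240131'}
print(parse_dataset_name("Final data v2"))
# None
```

A name that follows the convention already tells you the source system and extraction date, which is technical metadata you get for free.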

As the two types above suggest, metadata comes from many sources: policy documents, programming scripts, etc. Unfortunately, I have not come across a single piece of software that can manage all of it in one place, so businesses need to design and execute a metadata management strategy.

Below are a few tips to assist you in your journey.

Managing Metadata

As data scientists, we can reduce the time spent on data error investigation by doing the following:

1) Comment your data munging scripts in greater detail. Put keywords in your comments so you can take advantage of "Ctrl-F" to zoom in on the relevant code.

2) Document the ETL (Extract, Transform & Load) process and metadata. Use diagrams to show the flow of data into the different columns. You might want to use colors to your advantage, to highlight the different source systems and the lines/flows.

3) Collect all your metadata into a single place, where possible, and ensure every staff member knows where it is.

4) Continuing from (3), organize your metadata in a way that allows quick retrieval of the relevant metadata for investigation. Use the structured data investigation process to assist with the design of the metadata organization. Remember, it is for quick retrieval of relevant metadata.
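Tips (3) and (4) can be sketched in a few lines of code. The example below keeps lineage metadata in a single dictionary, organized around the question an investigation asks first: "where does this column come from?" Every dataset, column, and script name here is hypothetical, and a real catalog would likely live in a shared file or database rather than in a script.

```python
# Hypothetical catalog: each column records the script that produces it and
# the columns it is derived from. All names below are made up.
CATALOG = {
    "report.monthly_spend": {
        "script": "build_report.py",
        "derived_from": ["staging.clean_amount", "staging.customer_id"],
    },
    "staging.clean_amount": {
        "script": "clean_transactions.py",
        "derived_from": ["raw.txn_amount", "raw.txn_currency"],
    },
    "staging.customer_id": {
        "script": "clean_transactions.py",
        "derived_from": ["raw.cust_id"],
    },
    "raw.txn_amount": {"script": None, "derived_from": []},
    "raw.txn_currency": {"script": None, "derived_from": []},
    "raw.cust_id": {"script": None, "derived_from": []},
}


def trace_lineage(column, catalog):
    """Walk upstream from a column and return each (column, script) visited."""
    steps = []
    seen = set()
    stack = [column]
    while stack:
        current = stack.pop()
        if current in seen:
            continue  # a column feeding several others is listed only once
        seen.add(current)
        entry = catalog[current]
        steps.append((current, entry["script"]))
        # Reverse so upstream columns are visited in the order they are listed.
        stack.extend(reversed(entry["derived_from"]))
    return steps


def raw_sources(column, catalog):
    """Return the sorted raw columns (those derived from nothing) behind a column."""
    return sorted(
        name
        for name, _ in trace_lineage(column, catalog)
        if not catalog[name]["derived_from"]
    )


print(raw_sources("report.monthly_spend", CATALOG))
# ['raw.cust_id', 'raw.txn_amount', 'raw.txn_currency']
```

With lineage stored this way, an investigator can go from a suspicious column in the final report straight to the scripts and raw columns worth checking, instead of reading every script end to end.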

Keep in mind that well-documented metadata can cut down investigation time tremendously. START NOW! If you do not, then as your data and organization mature in data science, the "monster" may grow too big to handle.

In Conclusion

Managing your metadata well allows a business to reap tremendous benefits in the long run. Cutting down data investigation time lets businesses access quality insights quickly, leading to quicker and better decisions, an advantage that will grow in value as more competitors get on the data science bandwagon.

All the best in your data science journey! If you want to understand more about metadata management, drop me a note on LinkedIn or Twitter. Do share the article if you find it useful. Consider signing up for my newsletter too! Thank you!